Brief description

We present a novel method that, given a sequence of synchronized views of a human hand, recovers its 3D position, orientation and full articulation parameters. The adopted hand model is assembled from appropriately selected 3D geometric primitives. Hypothesized configurations (poses) of the hand model are projected onto the different camera views, and image features such as edge maps and hand silhouettes are computed. An objective function then quantifies the discrepancy between the predicted and the actual, observed features. Recovering the 3D hand pose amounts to estimating the parameters that minimize this objective function, a minimization that is performed using Particle Swarm Optimization (PSO). All the basic components of the method (feature extraction, objective function evaluation, optimization process) are inherently parallel. Thus, a GPU-based implementation achieves a speedup of two orders of magnitude over CPU processing. Extensive experimental results demonstrate qualitatively and quantitatively that accurate 3D pose recovery of a hand can be achieved robustly at a rate that greatly outperforms the current state of the art.

You might also be interested in our more recent work on efficient model-based 3D tracking of hand articulations using Kinect (BMVC’2011), where, instead of exploiting 2D visual cues extracted by a multicamera setup, we employ 2D and 3D visual cues provided by a Kinect (RGB-D) sensor. A more recent extension considers tracking the articulated motion of two strongly interacting hands (CVPR 2012). Also related is our work on full DOF tracking of a hand interacting with an object by modeling occlusions and physical constraints (ICCV'2011), where we seek not only the optimal hand model that explains the available hand observations, but the joint hand-object model that best explains both the available hand/object observations and the occlusions.
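To make the optimization step in the brief description more concrete, the following is a minimal sketch of a textbook Particle Swarm Optimization loop in plain Python. It is not the authors' GPU implementation and may differ from the PSO variant they use. The function names (`pso_minimize`, `toy_discrepancy`), the inertia and acceleration constants, the parameter bounds and the 26-dimensional toy objective are all assumptions chosen for illustration. In the actual method the objective would compare rendered hand hypotheses against observed edge maps and silhouettes.

```python
import random

def pso_minimize(objective, lower, upper, n_particles=16, n_generations=20,
                 w=0.72, c1=1.49, c2=1.49, seed=0):
    """Textbook PSO (inertia-weight form); all constants are illustrative."""
    rng = random.Random(seed)
    dim = len(lower)
    # Initialize particles uniformly inside the bounds, with zero velocity.
    pos = [[rng.uniform(lower[d], upper[d]) for d in range(dim)]
           for _ in range(n_particles)]
    vel = [[0.0] * dim for _ in range(n_particles)]
    best_pos = [p[:] for p in pos]             # per-particle best position
    best_val = [objective(p) for p in pos]     # per-particle best score
    g = min(range(n_particles), key=best_val.__getitem__)
    gbest_pos, gbest_val = best_pos[g][:], best_val[g]  # swarm-wide best

    for _ in range(n_generations):
        for i in range(n_particles):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                # Pull each particle toward its own best and the swarm's best.
                vel[i][d] = (w * vel[i][d]
                             + c1 * r1 * (best_pos[i][d] - pos[i][d])
                             + c2 * r2 * (gbest_pos[d] - pos[i][d]))
                # Clamp to the allowed parameter range.
                pos[i][d] = min(max(pos[i][d] + vel[i][d], lower[d]), upper[d])
            val = objective(pos[i])
            if val < best_val[i]:
                best_val[i], best_pos[i] = val, pos[i][:]
                if val < gbest_val:
                    gbest_val, gbest_pos = val, pos[i][:]
    return gbest_pos, gbest_val

# Hypothetical stand-in for the image-based objective: squared distance to a
# hidden "true" pose vector. The real objective compares image features.
DIM = 26  # assumed dimension, echoing the 26 DOF in the publication title
TRUE_POSE = [0.1 * (d % 5) for d in range(DIM)]
LOWER, UPPER = [-1.0] * DIM, [1.0] * DIM

def toy_discrepancy(pose):
    return sum((p - t) ** 2 for p, t in zip(pose, TRUE_POSE))

if __name__ == "__main__":
    pose, score = pso_minimize(toy_discrepancy, LOWER, UPPER)
    print("best discrepancy found:", score)
```

Each particle's objective evaluation is independent of the others within a generation, which is the property that makes this kind of search amenable to the parallel GPU evaluation described above.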

Sample results

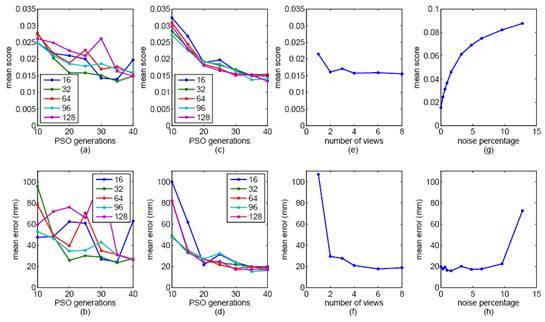

Performance of the proposed method for different values of selected parameters. In the plots of the top row, the vertical axis represents the mean value E of the optimization function. In the plots of the bottom row, the vertical axis represents mean error in mm (see paper for additional details). (a), (b): Varying values of PSO particles and generations for 2 views. (c), (d): Same as (a), (b) but for 8 views. (e), (f): Increasing number of views. (g), (h): Increasing amounts of noise.

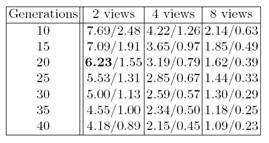

Number of multiframes processed per second for different numbers of PSO generations and camera views, with 16 or 128 particles per generation. The entry in boldface corresponds to 20 generations, 16 particles per generation and 2 views. This setup corresponds to the best trade-off between accuracy of results, computational performance and system complexity. This figure shows that the proposed method is capable of accurately and efficiently recovering the 3D pose of a hand observed from a stereo camera configuration at 6.2 Hz. If 8 cameras are employed, the method delivers poses at a rate of 1.6 Hz.
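As a rough back-of-the-envelope reading of the boldface entry, the snippet below converts the reported frame rate into objective evaluations per second. It assumes, hypothetically, that every particle is scored exactly once per generation and that each score requires one rendering per camera view. The helper name `evaluations_per_second` is illustrative only.

```python
def evaluations_per_second(generations, particles, views, multiframes_per_second):
    """Return (objective evaluations/s, per-view renderings/s).

    Assumes one objective evaluation per particle per generation and one
    rendering per view per evaluation (an assumption, not a reported figure).
    """
    evals_per_multiframe = generations * particles
    renders_per_multiframe = evals_per_multiframe * views
    return (evals_per_multiframe * multiframes_per_second,
            renders_per_multiframe * multiframes_per_second)

# Boldface configuration: 20 generations, 16 particles, 2 views at 6.2 Hz.
evals, renders = evaluations_per_second(20, 16, 2, 6.2)
print(f"{evals:.0f} objective evaluations/s, {renders:.0f} view renderings/s")
```

Under these assumptions the stereo setup corresponds to 320 objective evaluations per multiframe, or roughly 1,984 evaluations per second.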

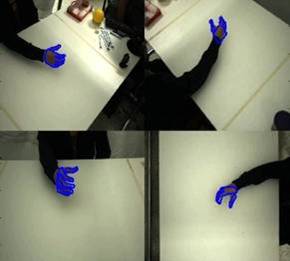

Download video with 3D hand pose estimation results. The blue contours in the above snapshot (right) and in the accompanying video are the projections of the 3D hand model (left), as estimated by the proposed method for these sequences.

Contributors

- Iasonas Oikonomidis, Nikolaos Kyriazis, Antonis Argyros.

- This work was partially supported by the IST-FP7-IP-215821 project GRASP.

Relevant publications

- I. Oikonomidis, N. Kyriazis and A.A. Argyros, “Markerless and Efficient 26-DOF Hand Pose Recovery”, in Proceedings of the 10th Asian Conference on Computer Vision, ACCV’2010, Part III LNCS 6494, pp. 744–757, Queenstown, New Zealand, Nov. 8-12, 2010.

- I. Oikonomidis, N. Kyriazis and A.A. Argyros, “Full DOF tracking of a hand interacting with an object by modeling occlusions and physical constraints”, in Proceedings of the 13th IEEE International Conference on Computer Vision, ICCV’2011, Barcelona, Spain, Nov. 6-13, 2011.

- I. Oikonomidis, N. Kyriazis and A.A. Argyros, “Efficient model-based 3D tracking of hand articulations using Kinect”, in Proceedings of the 22nd British Machine Vision Conference, BMVC’2011, University of Dundee, UK, Aug. 29-Sep. 1, 2011.

- I. Oikonomidis, N. Kyriazis and A.A. Argyros, “Tracking the articulated motion of two strongly interacting hands”, to appear in the Proceedings of IEEE Conference on Computer Vision and Pattern Recognition, CVPR 2012, Rhode Island, USA, June 18-20, 2012.

- N. Kyriazis, I. Oikonomidis and A.A. Argyros, “A GPU-powered computational framework for efficient 3D model-based vision”, Technical Report TR420, ICS-FORTH, Jul. 2011.

The electronic versions of the above publications can be downloaded from my publications page.