Brief description

We present a model-based, top-down solution to the problem of tracking the 3D position, orientation and full articulation of the human body from markerless visual observations obtained by two synchronized RGBD cameras. Inspired by recent advances in model-based hand tracking, we treat human body tracking as an optimization problem that is solved using stochastic optimization techniques. We show that the proposed approach is more accurate than state-of-the-art methods that rely on a single RGBD camera. Thus, for applications that require increased accuracy and can afford the extra complexity introduced by the second sensor, the proposed approach constitutes a viable solution to the problem of markerless human motion tracking. Our findings are supported by an extensive quantitative evaluation of the method, performed on a publicly available data set annotated with ground truth.
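To make the idea of tracking as stochastic optimization concrete, the following is a minimal sketch, not the method's implementation. It assumes particle swarm optimization (PSO) as the stochastic optimizer, which this page does not specify, and replaces the full body model with a toy planar two-link arm. The names `forward`, `discrepancy` and `pso_track`, and all parameter values, are hypothetical and chosen for illustration only.

```python
import math
import random


def forward(pose):
    """Joint positions (elbow, wrist) of a toy planar arm with two unit-length links."""
    a, b = pose  # shoulder angle, elbow angle (radians)
    elbow = (math.cos(a), math.sin(a))
    wrist = (elbow[0] + math.cos(a + b), elbow[1] + math.sin(a + b))
    return [elbow, wrist]


def discrepancy(pose, observed):
    """Sum of squared distances between model joints and observed points."""
    return sum((mx - ox) ** 2 + (my - oy) ** 2
               for (mx, my), (ox, oy) in zip(forward(pose), observed))


def pso_track(observed, prev_pose, n_particles=30, n_iters=80, spread=0.5, seed=0):
    """One tracking step: search near the previous pose for the best-fitting pose.

    Assumption: PSO with commonly used constriction-style coefficients.
    """
    rng = random.Random(seed)
    dim = len(prev_pose)
    # The first particle is the previous pose itself; the rest are perturbed copies.
    xs = [list(prev_pose)] + [
        [p + rng.uniform(-spread, spread) for p in prev_pose]
        for _ in range(n_particles - 1)
    ]
    vs = [[0.0] * dim for _ in range(n_particles)]
    pbest = [x[:] for x in xs]
    pbest_f = [discrepancy(x, observed) for x in xs]
    g = min(range(n_particles), key=pbest_f.__getitem__)
    gbest, gbest_f = pbest[g][:], pbest_f[g]
    w, c1, c2 = 0.729, 1.494, 1.494  # inertia, cognitive and social weights
    for _ in range(n_iters):
        for i in range(n_particles):
            for d in range(dim):
                vs[i][d] = (w * vs[i][d]
                            + c1 * rng.random() * (pbest[i][d] - xs[i][d])
                            + c2 * rng.random() * (gbest[d] - xs[i][d]))
                xs[i][d] += vs[i][d]
            f = discrepancy(xs[i], observed)
            if f < pbest_f[i]:
                pbest[i], pbest_f[i] = xs[i][:], f
                if f < gbest_f:
                    gbest, gbest_f = xs[i][:], f
    return gbest, gbest_f


# Synthetic "observation": joints generated from a known pose.
true_pose = (0.5, 0.2)
prev_pose = (0.3, 0.5)
observed = forward(true_pose)
pose, err = pso_track(observed, prev_pose)
print(pose, err)
```

Seeding the swarm with the previous frame's pose means the returned solution can never score worse than simply reusing that pose, which is a convenient property for frame-to-frame tracking. In a real system the discrepancy would compare the rendered body model against the observed depth data rather than against known joint positions.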

Method overview

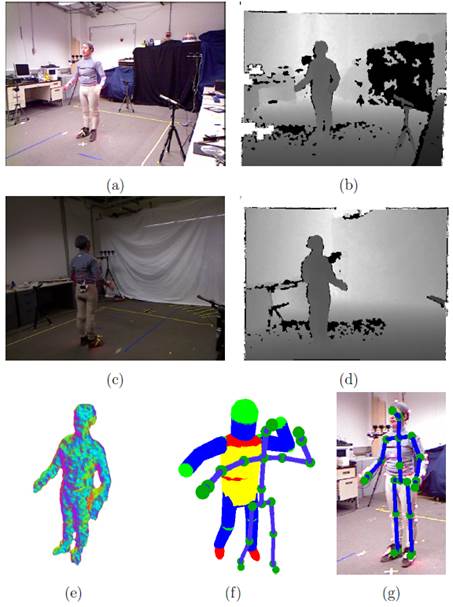

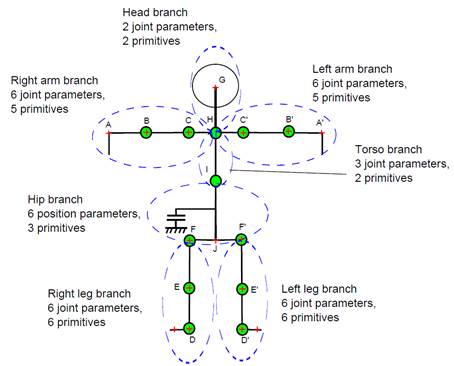

Left: Graphical illustration of the proposed method. Two RGB frames ((a), (c)) and the corresponding depth maps ((b), (d)). The volume (e) occupied by the person is reconstructed from the depth maps. The proposed method fits the employed human body model (f) to this volume, recovering the body articulation (g). Right: Definition of human body branches. Perturbing the torso and hip branches changes the parameters of their child branches. Model points marked with a red “+” denote joints whose 3D position is used to define the tracking error in the quantitative experimental evaluation of the method.
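The caption above states that the tracking error is defined from the 3D positions of selected joints, but this page does not give the exact formula. The sketch below assumes one common choice, the mean Euclidean distance between estimated and ground-truth joint positions. The function name `mean_joint_error` and the sample coordinates are hypothetical.

```python
import math


def mean_joint_error(estimated, ground_truth):
    """Mean Euclidean distance between corresponding 3D joint positions.

    Assumption: this averaging scheme is illustrative; the evaluation
    described on this page may define the error differently.
    """
    if not estimated or len(estimated) != len(ground_truth):
        raise ValueError("joint lists must be non-empty and of equal length")
    total = sum(math.dist(e, g) for e, g in zip(estimated, ground_truth))
    return total / len(estimated)


# Two made-up joints, in arbitrary length units.
estimated = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
ground_truth = [(0.0, 0.0, 3.0), (1.0, 4.0, 0.0)]
error = mean_joint_error(estimated, ground_truth)
print(error)
```

Only the joints marked for evaluation would be passed in, with the estimated and ground-truth lists in the same joint order.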

Sample results

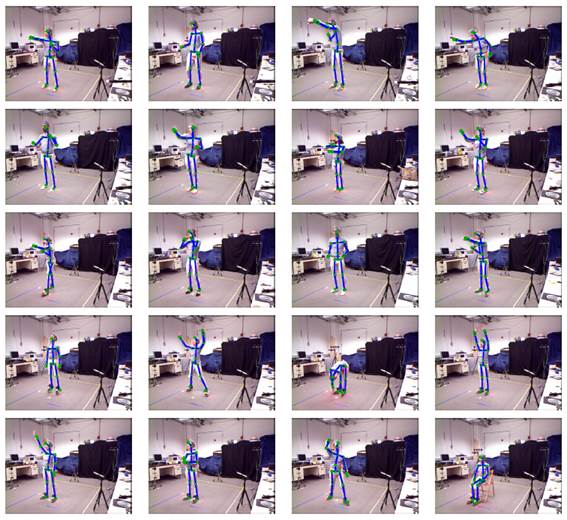

Qualitative results of human motion tracking on the Berkeley MHAD dataset.

Various body configurations of different subjects, as estimated by the method.

Contributors

- Damien Michel, Costas Panagiotakis, Antonis Argyros.

Relevant publications

- D. Michel, C. Panagiotakis, A.A. Argyros, “Tracking the articulated motion of the human body with two RGBD cameras”, Machine Vision and Applications.

The electronic versions of the above publications can be downloaded from my publications page.