Brief description

There is currently an abundance of vision algorithms that, given a sequence of images acquired from sufficiently close successive 3D locations, can determine the relative positions of the viewpoints from which the images were captured. However, very few of these algorithms can cope with unordered image sets. We present an efficient method for recovering the position and orientation of the viewpoints of a set of panoramic images for which no a priori ordering information is available, along with certain structure information about the imaged environment. The proposed approach operates sequentially. It employs the Levenshtein distance to infer the spatial proximity of image viewpoints and thus determine the order in which images should be processed. The Levenshtein distance also provides matches between imaged points, from which the corresponding environment points can be reconstructed. Image matching with the aid of the Levenshtein distance forms the crux of an iterative process that alternates between localizing images from multiple reconstructed points and reconstructing points from multiple image projections, until all views have been localized. Periodic refinement of the reconstruction with bundle adjustment distributes the reconstruction error among the images. The approach is demonstrated on several unordered sets of panoramic images acquired in indoor environments.
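To make the role of the Levenshtein distance more concrete, here is a minimal sketch. This page does not specify how a panorama is turned into a sequence or exactly how the processing order is derived from the distances. The sketch therefore assumes that each panorama has already been reduced to a 1D sequence of symbols (for example, quantized features along the horizon), and it uses a simple greedy nearest-neighbour rule to choose the processing order. Both choices are illustrative assumptions, not the authors' method. The sketch also ignores the circular (wrap-around) nature of panoramic sequences. The function names `levenshtein_with_matches` and `processing_order` are hypothetical.

```python
from itertools import combinations


def levenshtein_with_matches(a, b):
    """Return (distance, matches) for two symbol sequences a and b.

    `matches` lists the (i, j) index pairs that one optimal alignment pairs
    up with identical symbols (a stand-in for matched image points).
    """
    n, m = len(a), len(b)
    # d[i][j] = edit distance between the prefixes a[:i] and b[:j]
    d = [[0] * (m + 1) for _ in range(n + 1)]
    for i in range(n + 1):
        d[i][0] = i
    for j in range(m + 1):
        d[0][j] = j
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            cost = 0 if a[i - 1] == b[j - 1] else 1
            d[i][j] = min(d[i - 1][j] + 1,         # deletion
                          d[i][j - 1] + 1,         # insertion
                          d[i - 1][j - 1] + cost)  # match or substitution
    # Trace back one optimal alignment to recover the matched positions.
    matches = []
    i, j = n, m
    while i > 0 and j > 0:
        cost = 0 if a[i - 1] == b[j - 1] else 1
        if d[i][j] == d[i - 1][j - 1] + cost:
            if cost == 0:
                matches.append((i - 1, j - 1))
            i, j = i - 1, j - 1
        elif d[i][j] == d[i - 1][j] + 1:
            i -= 1
        else:
            j -= 1
    matches.reverse()
    return d[n][m], matches


def processing_order(sequences):
    """Hypothetical greedy ordering (an assumption, not the published scheme):
    start with the closest pair, then repeatedly add the unprocessed image
    whose edit distance to any already processed image is smallest."""
    k = len(sequences)
    if k < 2:
        return list(range(k))
    dist = {}
    for p, q in combinations(range(k), 2):
        dist[p, q] = dist[q, p] = levenshtein_with_matches(
            sequences[p], sequences[q])[0]
    order = list(min(combinations(range(k), 2), key=lambda pq: dist[pq]))
    remaining = set(range(k)) - set(order)
    while remaining:
        # sorted() makes tie-breaking deterministic (lowest index wins)
        nxt = min(sorted(remaining),
                  key=lambda r: min(dist[r, o] for o in order))
        order.append(nxt)
        remaining.remove(nxt)
    return order
```

For example, `levenshtein_with_matches("abcdef", "abcdxf")` returns `(1, [(0, 0), (1, 1), (2, 2), (3, 3), (5, 5)])`, and `processing_order(["abcdef", "abcdxf", "qrstuv", "abcxxf"])` returns `[0, 1, 3, 2]`. The dissimilar sequence is processed last, which mirrors the idea that a small distance suggests nearby viewpoints.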

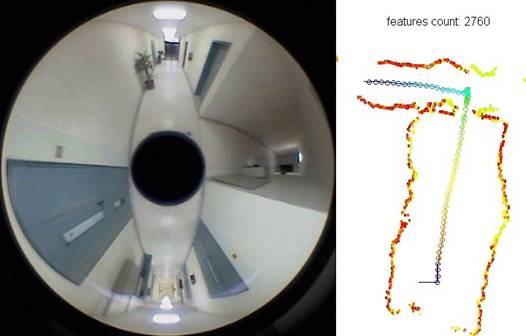

Sample results

Video (available for download): an example of applying the proposed method to simultaneous robot mapping and localization. Note that the image order is not provided to the algorithm. Instead, the algorithm infers it.

Contributors

Damien Michel, Antonis Argyros, Manolis Lourakis

Relevant publications

- D. Michel, A.A. Argyros and M.I.A. Lourakis, "Localizing Unordered Panoramic Images Using the Levenshtein Distance", Proc. of the 7th Workshop on Omnidirectional Vision, Camera Networks and Non-classical Cameras (OMNIVIS'2007), in conjunction with ICCV'07, Rio de Janeiro, Brazil, 2007

- D. Michel, A.A. Argyros and M.I.A. Lourakis, "Horizon matching for localizing unordered panoramic images", Computer Vision and Image Understanding, Elsevier, vol. 114, no. 2, pp. 274-285, 2010

The electronic versions of the above publications can be downloaded from my publications page.