Brief description

We present a method for the real-time estimation of the full 3D pose of one or more human hands using a single commodity RGB camera. Recent work in the area has shown impressive progress using RGBD input. However, since the introduction of RGBD sensors, there has been little progress for the case of monocular color input. We capitalize on the latest advances in deep learning, combining them with the power of generative hand pose estimation techniques to achieve real-time monocular 3D hand pose estimation in unrestricted scenarios. More specifically, given an RGB image and the relevant camera calibration information, we employ a state-of-the-art detector to localize hands. Given a crop of a hand in the image, we run the pretrained OpenPose network for hands to estimate the 2D locations of the hand joints. Finally, non-linear least-squares minimization fits a 3D model of the hand to the estimated 2D joint positions, recovering the 3D hand pose. Extensive experimental results provide a comparison to the state of the art as well as a qualitative assessment of the method in the wild.

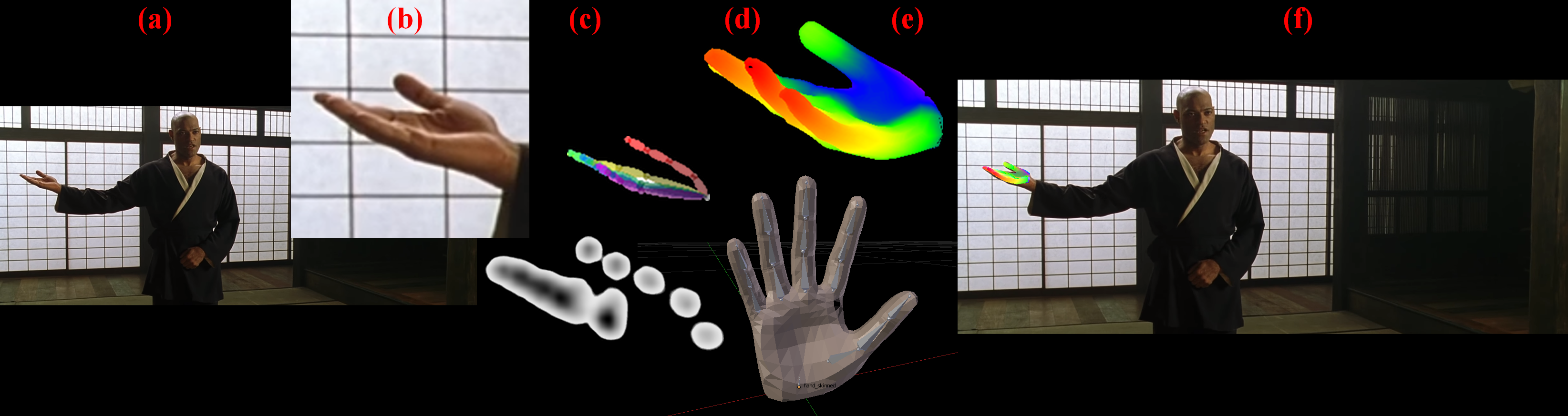

Method overview

Graphical illustration of the key steps of the proposed method. The method operates on a single RGB view (a). The hand is detected, and a cropped image containing it (b) is passed to OpenPose, which estimates the 2D locations of the hand joints (c). A hand model (d) is then transformed so that the distances between the projections of its joints and their observed counterparts are minimized (e). Panel (f) shows the final solution (e) superimposed on the input image (a).
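The model-fitting step, (d) to (e), can be illustrated with a deliberately reduced sketch. It assumes a rigid set of joints with only three translation parameters, whereas the actual method fits a full articulated hand model. The joint coordinates, camera intrinsics and function names below are hypothetical and are not taken from the released library. The solver is a simple damped Gauss-Newton loop with a numeric Jacobian, used here as a stand-in for whichever non-linear least-squares solver the library employs.

```python
import numpy as np


def project(points_3d, fx, fy, cx, cy):
    """Pinhole projection of (N, 3) camera-space points to (N, 2) pixels."""
    x, y, z = points_3d[:, 0], points_3d[:, 1], points_3d[:, 2]
    return np.stack([fx * x / z + cx, fy * y / z + cy], axis=1)


def residuals(translation, model_joints, observed_2d, intrinsics):
    """Flattened 2D reprojection errors of the translated model joints."""
    projected = project(model_joints + translation, *intrinsics)
    return (projected - observed_2d).ravel()


def fit_translation(model_joints, observed_2d, intrinsics, init,
                    iters=100, damping=1e-3, eps=1e-6):
    """Minimize the squared reprojection error with damped Gauss-Newton."""
    params = np.asarray(init, dtype=float)
    for _ in range(iters):
        r = residuals(params, model_joints, observed_2d, intrinsics)
        # Forward-difference Jacobian: one column per parameter.
        jac = np.empty((r.size, params.size))
        for k in range(params.size):
            step = np.zeros_like(params)
            step[k] = eps
            r_step = residuals(params + step, model_joints, observed_2d, intrinsics)
            jac[:, k] = (r_step - r) / eps
        hessian = jac.T @ jac
        damped = hessian + damping * np.diag(np.diag(hessian))
        delta = np.linalg.solve(damped, -jac.T @ r)
        candidate = params + delta
        r_new = residuals(candidate, model_joints, observed_2d, intrinsics)
        if r_new @ r_new < r @ r:
            params, damping = candidate, damping * 0.5  # accept the step
        else:
            damping *= 10.0  # reject it and be more cautious next time
        if np.linalg.norm(delta) < 1e-12:
            break
    return params


# Hypothetical rigid "hand": wrist plus five fingertips, in metres.
model_joints = np.array([
    [0.00, 0.00, 0.00],
    [-0.05, -0.06, 0.01],
    [-0.02, -0.10, 0.00],
    [0.00, -0.11, 0.00],
    [0.02, -0.10, 0.00],
    [0.04, -0.08, 0.01],
])
intrinsics = (600.0, 600.0, 320.0, 240.0)  # assumed fx, fy, cx, cy in pixels

# Synthesize 2D "detections" from a known pose, then try to recover that pose.
true_translation = np.array([0.05, -0.02, 0.50])
observed_2d = project(model_joints + true_translation, *intrinsics)

estimated = fit_translation(model_joints, observed_2d, intrinsics,
                            init=[0.0, 0.0, 0.3])
print(np.round(estimated, 4))
```

With noise-free synthetic observations, the estimate should match the translation used to generate them. In the real pipeline the observations come from the 2D joint detector, and the parameter vector also covers the global rotation and the finger articulation.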

Sample results

Download library

Download from the GitHub repository.

Contributors

- Paschalis Panteleris, Iason Oikonomidis, Antonis A. Argyros.

- This work has been supported by the EU projects Co4Robots and WEARHAP.

Relevant publications

- P. Panteleris, I. Oikonomidis and A.A. Argyros, "Using a single RGB frame for real time 3D hand pose estimation in the wild", In IEEE Winter Conference on Applications of Computer Vision (WACV 2018), Lake Tahoe, NV, USA, March 2018. Also available at arXiv.

The electronic versions of the above publications can be downloaded from my publications page.