Brief description

This work extends previous work on biologically inspired reactive robot navigation. Here, the centering behavior is achieved using perceptual information acquired through panoramic vision, rather than through a complex trinocular vision system.

In its continuous attempt to build intelligent artificial creatures, robotics has often drawn inspiration from nature. Particularly interesting is the remarkable variety of light-sensing structures and information-processing strategies found in animal visual systems. The physiology of these systems appears to have been shaped, through evolution, by the ecological niche and lifestyle of each species. Insects such as bees, ants and flies have become a particularly appealing source of inspiration because of the remarkable navigational capabilities they display despite their relatively limited neural resources. This limitation appears to have forced them to develop navigation strategies that are ingenious in their simplicity and robust in their implementation, both invaluable characteristics for robotic systems.

The navigation task examined in this work is the centering behavior, which consists of moving along the middle of a corridor-like environment. Bees accomplish similar tasks by exploiting three features of their visuo-motor system: the wide field of view of their eyes, their ability to estimate retinal motion, and a control mechanism that reorients their flight so that retinal motion in the two eyes remains balanced. Inspired by this biological solution, we develop a reactive, vision-based centering behavior for a mobile robot equipped with a panoramic camera providing a 360° field of view, together with a sensor-based control law in which optical flow measured along several distinct “looking” directions across the panoramic field of view is used directly in the control loop. No reconstruction of the robot’s state is attempted; the information extracted from the sensory data is not sufficient for this, but it is sufficient for the proposed control law to accomplish the desired task.
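
Why balancing retinal motion leads to centering can be illustrated with a simplified planar model, assumed here for illustration and not taken from the analysis in the cited papers. Consider a robot moving with forward speed $v$ and turn rate $\omega$ (counter-clockwise positive) in a straight corridor of width $W = d_L + d_R$, heading parallel to the walls at distances $d_L$ and $d_R$ from the left and right walls. A wall point seen at bearing $\theta$ from the heading lies at range $r = d/|\sin\theta|$, and its bearing changes at the rate below. The rotational term $-\omega$ is the same in every direction, so it can be removed, leaving the translational flow magnitudes $F_L$ and $F_R$ for symmetric directions $\pm\theta$:

$$
\dot{\theta} = \frac{v \sin\theta}{r} - \omega
$$

$$
F_L(\theta) = \frac{v \sin^2\theta}{d_L}, \qquad
F_R(\theta) = \frac{v \sin^2\theta}{d_R}, \qquad 0 < \theta < \pi
$$

$$
\frac{F_L(\theta)}{F_R(\theta)} = \frac{d_R}{d_L}
\quad\Longrightarrow\quad
F_L(\theta) = F_R(\theta) \iff d_L = d_R = \frac{W}{2}
$$

In this model the balance condition holds for any symmetric pair of directions and depends on neither $v$ nor the individual distances, which is why the robot can center itself without estimating its state.
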
Using a panoramic camera, rather than a multi-camera setup or a mechanism that reorients the gaze of a conventional perspective camera, simplifies the processing of the sensory information and reduces the complexity of the required hardware. A detailed theoretical analysis, followed by extensive experimentation, demonstrates the effectiveness of this biologically motivated approach.
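
Because a panoramic camera sees both corridor walls at once, flow along several looking directions on each side is available from a single image. The following is a minimal simulation sketch of a flow-balancing steering rule in that spirit. It is not the control law of the cited papers: the corridor width, speed, gain, look directions and all function names are hypothetical, the flow values are synthesized from the known corridor geometry as a stand-in for measured optical flow, and the rotational flow component is assumed to have already been removed. The controller itself sees only the flow values, never the robot’s position or heading.

```python
import math

# Hypothetical parameters, chosen for illustration only (not from the papers).
WIDTH = 2.0   # corridor width W [m]; right wall at y = 0, left wall at y = WIDTH
SPEED = 0.3   # constant forward speed v [m/s]
GAIN = 0.5    # dimensionless steering gain k
LOOK_DIRS = [math.radians(a) for a in (30.0, 60.0, 90.0)]  # bearings on each side


def translational_flow(y, heading, bearing):
    """Magnitude of the rotation-free flow v*sin(bearing)/r seen at `bearing`
    (robot frame, counter-clockwise positive) from lateral position y.
    Stands in for a flow measurement taken from the panoramic image."""
    alpha = heading + bearing                    # ray direction in the corridor frame
    s = math.sin(alpha)
    if abs(s) < 1e-9:
        return 0.0                               # ray parallel to the walls
    r = (WIDTH - y) / s if s > 0 else y / -s     # range to whichever wall the ray hits
    return abs(SPEED * math.sin(bearing) / r)


def steering_command(left_flows, right_flows):
    """Flow-balancing rule: turn away from the side with the larger flow."""
    return GAIN * sum(fr - fl for fl, fr in zip(left_flows, right_flows))


def simulate(y0, heading0=0.0, dt=0.05, duration=60.0):
    """Unicycle kinematics integrated with forward Euler steps."""
    y, heading = y0, heading0
    for _ in range(int(round(duration / dt))):
        left = [translational_flow(y, heading, b) for b in LOOK_DIRS]
        right = [translational_flow(y, heading, -b) for b in LOOK_DIRS]
        omega = steering_command(left, right)    # positive = turn left
        y += SPEED * math.sin(heading) * dt
        heading += omega * dt
        if not 0.0 < y < WIDTH:
            raise RuntimeError("collision with a wall")
    return y, heading


if __name__ == "__main__":
    y_final, heading_final = simulate(y0=0.7)
    print(f"final offset from center: {y_final - WIDTH / 2:+.4f} m, "
          f"heading: {math.degrees(heading_final):+.2f} deg")
```

In this simplified model, the ±90° pair alone yields an undamped lateral oscillation about the center line (linearizing gives a pure harmonic oscillator), whereas the forward-looking pairs at 30° and 60° add a term proportional to the heading error that damps it. This is one reason to combine several looking directions rather than rely on a single lateral one.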

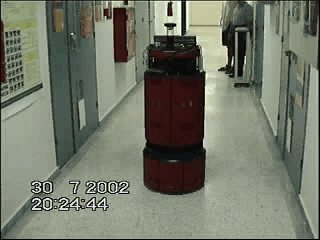

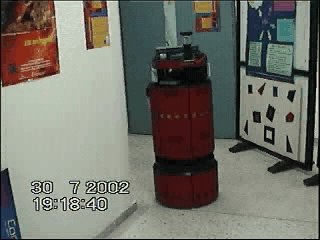

Sample results

Download video. Example robot centering behavior.

Download video. Another example of biomimetic centering behavior. In this example, the robot’s translational velocity varies with the width of the corridor.

Download video. Centering in a right-angled corridor (observers’ view)

Download video. Centering in a right-angled corridor (panoramic view)
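
In the second video, the robot’s speed changes with the corridor width. One plausible way to obtain such behavior, assumed here for illustration and not necessarily the mechanism used in the papers, is to adjust the forward speed so that the total lateral flow stays near a set-point. For a centered robot looking perpendicular to its heading, the total flow is $v(1/d_L + 1/d_R) = 4v/W$, so holding it at a set-point $F_{ref}$ gives $v = F_{ref}W/4$: the robot slows down in narrow sections and speeds up in wide ones. The function name, set-point and gain below are hypothetical.

```python
def regulate_speed(v, width, flow_ref=0.6, gain=0.5, dt=0.05, steps=400):
    """Hypothetical speed loop for a robot kept at the center of a corridor.

    With the robot centered (d_L = d_R = width / 2), the lateral flow at
    bearings of +/-90 degrees sums to v/d_L + v/d_R = 4 * v / width.
    Each step nudges v so that this total approaches flow_ref [rad/s].
    """
    for _ in range(steps):
        total_flow = 4.0 * v / width            # total lateral flow [rad/s]
        v += gain * (flow_ref - total_flow) * dt
    return v  # settles at flow_ref * width / 4


if __name__ == "__main__":
    for w in (1.0, 2.0, 3.0):
        print(f"width {w:.1f} m -> speed {regulate_speed(0.3, w):.2f} m/s")
    # prints speeds of 0.15, 0.30 and 0.45 m/s
```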

Contributors

Antonis Argyros, Dimitris Tsakiris, Cedric Groyer.

Relevant publications

- A.A. Argyros, D.P. Tsakiris, C. Groyer, “Bio-mimetic Centering Behavior for Robotic Systems with Panoramic Sensors”, IEEE Robotics and Automation Magazine, Special Issue on Panoramic Robotics, pp. 21–30, December 2004.

- A.A. Argyros, P. Georgiades, P. Trahanias, D. Tsakiris, “Semi-autonomous Navigation of a Robotic Wheelchair”, Journal of Intelligent and Robotic Systems, Special Issue on “Medical and Rehabilitation Robotics”, Kluwer Academic Publishers, Vol. 34, pp. 315–329, July 2002.

- D. Tsakiris, A.A. Argyros, “Corridor Following by Nonholonomic Mobile Robots Equipped with Panoramic Cameras”, in Proceedings of the IEEE Mediterranean Conference on Control and Automation 2000 (MED’00), invited session on “Sensor-based Control for Robotic Systems”, Patras, Greece, July 2000.

The electronic versions of the above publications can be downloaded from my publications page.