Brief description

Due to occlusions, estimating the full pose of a human hand interacting with an object is much more challenging than recovering the pose of a hand observed in isolation. In this work we formulate an optimization problem whose solution is the 26-DOF hand pose together with the pose and model parameters of the manipulated object. The optimization seeks the joint hand-object model that (a) best explains the incompleteness of observations resulting from occlusions due to hand-object interaction and (b) is physically plausible, in the sense that the hand does not share the same physical space with the object. The proposed method is the first to efficiently solve the continuous, full-DOF, joint hand-object tracking problem based solely on camera input. Additionally, it is the first to demonstrate how hand-object interaction can be exploited as a context that facilitates hand pose estimation, instead of being treated as a complicating factor. Extensive quantitative and qualitative experiments with simulated and real-world image sequences, as well as a comparative evaluation against a state-of-the-art method for pose estimation of isolated hands, support these findings.

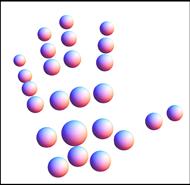

Graphical illustration of the employed 26-DOF 3D hand model, consisting of 37 geometric primitives and the 25 spheres constituting the hand’s collision model.
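A sphere-based collision model makes penetration tests cheap, since two spheres overlap exactly when the distance between their centers is smaller than the sum of their radii. The following is a minimal sketch of such a test, not the authors' implementation: the function names, the representation of the object as a set of spheres, and the choice of summing overlap depths are all assumptions made for illustration.

```python
import math

def penetration_depth(center_a, radius_a, center_b, radius_b):
    """Overlap depth of two spheres; 0.0 when they do not intersect."""
    distance = math.dist(center_a, center_b)
    return max(0.0, radius_a + radius_b - distance)

def penetration_penalty(hand_spheres, object_spheres):
    """Sum of pairwise overlap depths between hand and object spheres.

    Each sphere is a (center, radius) pair. Summing depths is an
    assumed penalty form, chosen here only for simplicity.
    """
    return sum(
        penetration_depth(hand_c, hand_r, obj_c, obj_r)
        for hand_c, hand_r in hand_spheres
        for obj_c, obj_r in object_spheres
    )

# Hypothetical toy data (units in mm): two hand spheres, one object sphere.
hand = [((0.0, 0.0, 0.0), 10.0), ((30.0, 0.0, 0.0), 8.0)]
obj = [((15.0, 0.0, 0.0), 7.0)]
penalty = penetration_penalty(hand, obj)
print(penalty)
```

In this toy case only the first hand sphere overlaps the object sphere; the second one merely touches it and contributes nothing. A penalty of this kind is zero for any interpenetration-free configuration and grows as the hand sinks into the object.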

In this work we extend our earlier approach for markerless and efficient 26-DOF hand pose recovery (PEHI, ACCV 2010) by jointly considering the hand and the manipulated object. PEHI is a generative, multiview method for 3D hand pose recovery. In each of the acquired views, reference features are computed based on skin color and edges. A 26-DOF 3D hand model is adopted. For a given hand configuration, skin and edge feature maps are rendered and compared directly to the respective observations. The discrepancy between a given 3D hand pose and the observations is quantified by an objective function that is minimized through Particle Swarm Optimization (PSO). The whole approach is implemented efficiently on a GPU. In the new approach (HOPE), we seek not only the optimal hand model that explains the available hand observations, but the joint hand-object model that best explains both the available hand/object observations and the occlusions. Additionally, the objective function penalizes hand-object penetration, favoring physically plausible solutions. Experiments demonstrate that these constraints help considerably in reaching an accurate solution to this more complex and interesting problem.
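To make the optimization step concrete, here is a minimal CPU sketch of a standard global-best PSO minimizing a toy objective. It is not the authors' GPU implementation. The inertia and acceleration coefficients, the clamping of positions to bounds, and the quadratic `toy_objective` (a stand-in for the rendering-based discrepancy plus penetration penalty) are assumptions. The defaults of 64 particles and 40 generations mirror one of the configurations reported in the results below.

```python
import random

def pso_minimize(objective, lower, upper, particles=64, generations=40, seed=0):
    """Global-best PSO over a box-bounded parameter vector."""
    rng = random.Random(seed)
    dim = len(lower)
    w, c1, c2 = 0.72, 1.49, 1.49  # assumed, commonly used coefficients

    pos = [[rng.uniform(lower[d], upper[d]) for d in range(dim)]
           for _ in range(particles)]
    vel = [[0.0] * dim for _ in range(particles)]
    best_pos = [p[:] for p in pos]              # per-particle best positions
    best_val = [objective(p) for p in pos]      # per-particle best scores
    g = min(range(particles), key=best_val.__getitem__)
    g_pos, g_val = best_pos[g][:], best_val[g]  # swarm-wide best

    for _ in range(generations):
        for i in range(particles):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                vel[i][d] = (w * vel[i][d]
                             + c1 * r1 * (best_pos[i][d] - pos[i][d])
                             + c2 * r2 * (g_pos[d] - pos[i][d]))
                # Keep the particle inside the allowed parameter range.
                pos[i][d] = min(max(pos[i][d] + vel[i][d], lower[d]), upper[d])
            val = objective(pos[i])
            if val < best_val[i]:
                best_val[i], best_pos[i] = val, pos[i][:]
                if val < g_val:
                    g_val, g_pos = val, pos[i][:]
    return g_pos, g_val

def toy_objective(x, target=(1.0, -2.0, 0.5)):
    """Hypothetical stand-in for the discrepancy between model and observations."""
    return sum((xi - ti) ** 2 for xi, ti in zip(x, target))

lower, upper = [-5.0] * 3, [5.0] * 3
solution, score = pso_minimize(toy_objective, lower, upper)
print(solution, score)
```

In the actual tracking setting the parameter vector would hold the hand and object parameters, and each objective evaluation would involve rendering the joint model in every view. That cost is why the number of particles and generations is the main accuracy/speed trade-off.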

- You might also be interested in having a look at our work on efficient model-based 3D tracking of hand articulations using Kinect (BMVC’2011) where instead of exploiting 2D visual cues extracted by a multicamera setup, we employ 2D and 3D visual cues resulting from a Kinect (RGB-D) sensor. A more recent extension considers tracking the articulated motion of two strongly interacting hands (CVPR 2012).

Sample results

Mean error D for hand pose estimation (in mm) for HOPE (left) and PEHI (right) for different PSO parameters and number of views. (a),(b): Varying PSO particles and generations for 2 views. (c),(d): Same as (a),(b) for 8 views. (e): Increasing number of views, 40 generations, 64 particles/generation.

Contributors

- Iasonas Oikonomidis, Nikolaos Kyriazis, Antonis A. Argyros.

- This work was partially supported by the IST-FP7-IP-215821 project GRASP.

Relevant publications

- I. Oikonomidis, N. Kyriazis and A.A. Argyros, “Full DOF tracking of a hand interacting with an object by modeling occlusions and physical constraints”, in Proceedings of the 13th IEEE International Conference on Computer Vision, ICCV’2011, Barcelona, Spain, Nov. 6-13, 2011.

- I. Oikonomidis, N. Kyriazis and A.A. Argyros, “Markerless and Efficient 26-DOF Hand Pose Recovery”, in Proceedings of the 10th Asian Conference on Computer Vision, ACCV’2010, Part III LNCS 6494, pp. 744–757, Queenstown, New Zealand, Nov. 8-12, 2010.

- I. Oikonomidis, N. Kyriazis and A.A. Argyros, “Efficient model-based 3D tracking of hand articulations using Kinect”, in Proceedings of the 22nd British Machine Vision Conference, BMVC’2011, University of Dundee, UK, Aug. 29-Sep. 1, 2011.

- I. Oikonomidis, N. Kyriazis and A.A. Argyros, “Tracking the articulated motion of two strongly interacting hands”, in Proceedings of IEEE Conference on Computer Vision and Pattern Recognition, CVPR 2012, Rhode Island, USA, June 18-20, 2012.

- N. Kyriazis, I. Oikonomidis and A.A. Argyros, “A GPU-powered computational framework for efficient 3D model-based vision”, Technical Report TR420, ICS-FORTH, Jul. 2011.

The electronic versions of the above publications can be downloaded from my publications page.